Thank you for your patience with me in getting this series done! As noted in my previous article, work and life have been a bit busy. I am trying to post more over on Notes, and now that Substack has a notion of “Following” similar to X/Twitter, it might be worth considering as a way to see useful content. (I don’t plan to turn Notes into a clone of what I post on X/Twitter, though.) And for what it’s worth, I’ve found the Substack app pretty nice to use as a reader myself.

Note: This article is part 4 in a series. You can find the inaugural article here.

What is a Quantum Neural Network?

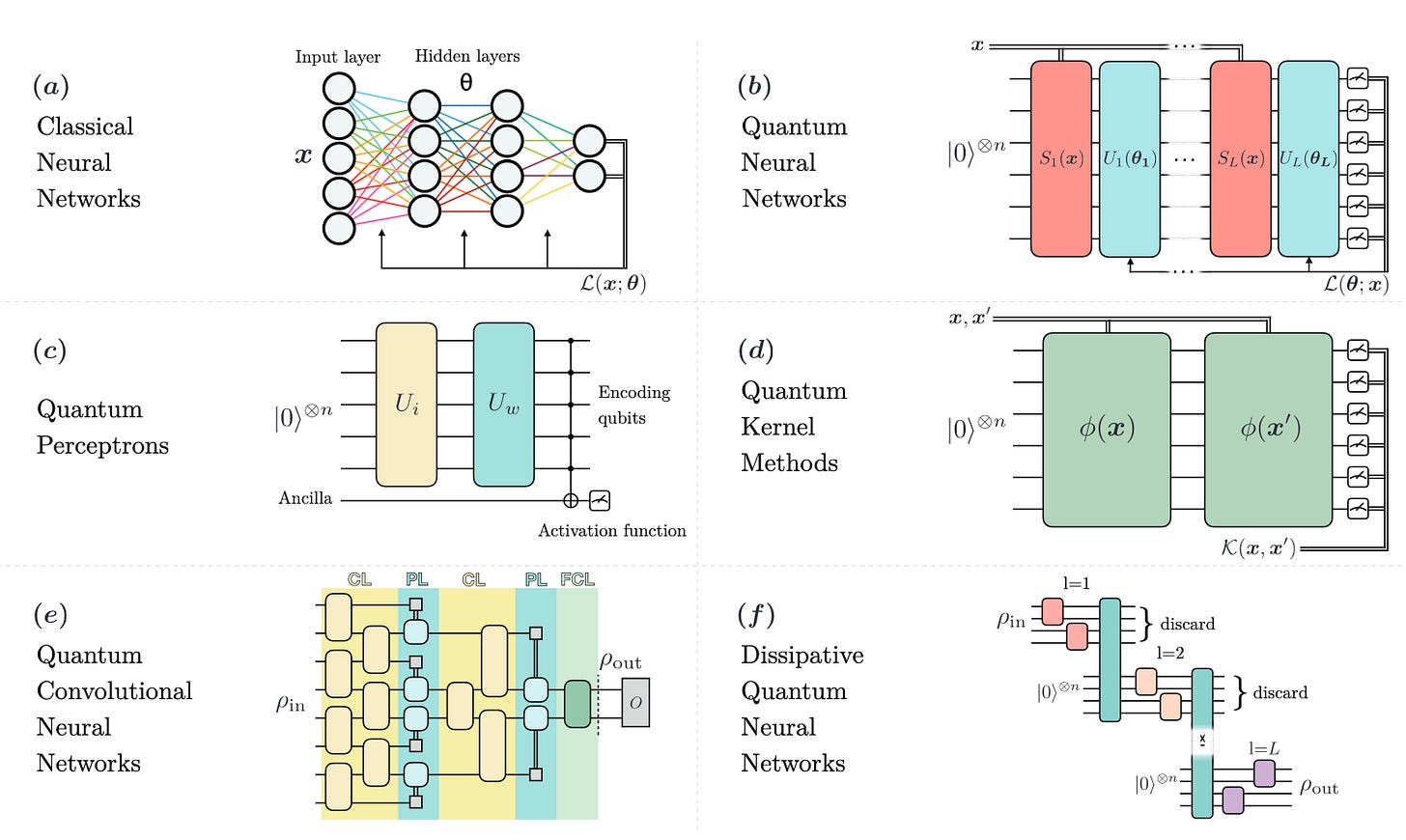

Having discussed the topic of quantum kernels in two prior articles, we turn next to quantum neural networks (QNNs). This topic is actually quite broad, as quantum analogues have been proposed for the classical neuron, convolutional neural networks, and recurrent neural networks. A survey of these is available via Quantum computing models for artificial neural networks, and is summarized in the image below.

As you can see from the image, there are many different ways to quantize a classical NN (sub-figure a). Note, though, that (d) shows quantum kernels, which are not QNNs. In addition, for (b), recall that if the number of repetitions of the circuit is 1 (i.e., data encoding followed by a variational circuit), then the paper Supervised quantum machine learning models are kernel methods tells us that those models are equivalent to quantum kernels.

One of the authors of Quantum computing models for artificial neural networks gave a lecture on this topic at the 2021 Qiskit Global Summer School; watch Introduction & Applications of Quantum Models (lecture notes are here). This lecture provides an overview of these models. A few items worth noting:

Quantum perceptrons are among the oldest such models. One of the main challenges in defining them was figuring out how to get a nonlinear function out of quantum operations, which act linearly on quantum states.

Quantum neural networks based on multiple layers of data encoding and tunable parameterized circuits are universal function approximators. In terms of a Fourier series analysis, the frequencies of that decomposition depend on the encoding circuit.

Quantum convolutional neural networks have the advantage that the number of parameters required to describe the model scales logarithmically with the number of qubits. In turn, these models are actually quite trainable, both because the number of parameters is smaller than in other QNN types, and because these models do not exhibit the “barren plateau” phenomenon (discussed below).
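To make the Fourier series point above a bit more concrete, here is a minimal NumPy sketch. Everything in it is an assumption chosen for illustration rather than something taken from the lecture: a single qubit, an RX(x) gate as the encoding, random 2x2 unitaries standing in for trained variational blocks, and a measurement of Z. With this encoding, repeating the encoding n_layers times should only ever produce integer frequencies up to n_layers, no matter what the variational blocks are.

```python
import numpy as np

def rx(x):
    """Single-qubit encoding gate RX(x) = exp(-i x X / 2)."""
    c, s = np.cos(x / 2), np.sin(x / 2)
    return np.array([[c, -1j * s], [-1j * s, c]])

def random_unitary(rng):
    """A random 2x2 unitary (hypothetical stand-in for a trained block)."""
    a = rng.normal(size=(2, 2)) + 1j * rng.normal(size=(2, 2))
    q, _ = np.linalg.qr(a)
    return q

def model(x, blocks):
    """Expectation of Z after alternating variational blocks and RX(x)."""
    state = blocks[0] @ np.array([1.0, 0.0], dtype=complex)
    for w in blocks[1:]:
        state = w @ (rx(x) @ state)
    z = np.diag([1.0, -1.0])
    return np.real(np.vdot(state, z @ state))

def active_frequencies(n_layers, n_samples=32, seed=0, tol=1e-10):
    """Integer frequencies whose Fourier coefficient is non-negligible."""
    rng = np.random.default_rng(seed)
    blocks = [random_unitary(rng) for _ in range(n_layers + 1)]
    xs = 2 * np.pi * np.arange(n_samples) / n_samples
    values = np.array([model(x, blocks) for x in xs])
    coeffs = np.fft.fft(values) / n_samples
    freqs = np.fft.fftfreq(n_samples, d=1.0 / n_samples)
    return sorted({int(abs(round(f)))
                   for f, c in zip(freqs, coeffs) if abs(c) > tol})

for n_layers in (1, 2, 3):
    print(n_layers, active_frequencies(n_layers))
```

Changing the seed changes the size of each Fourier coefficient, but not which frequencies are available; swapping the encoding gate is what changes the spectrum.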

How are quantum neural networks trained?

Like quantum kernels, QNNs utilize parameterized quantum circuits. Training a QNN is analogous to training a classical neural network in that it is necessary to update the parameters of the model according to some cost function. Usually, this involves calculating or estimating gradients.

For an overview of calculating gradients, watch QHack 2021: Christa Zoufal—Quantum Gradients. In addition, a common method is to use analytic gradients, based on parameter-shift rules. The rules describe how gradients of a circuit can be computed by evaluating the model at different parameter values, and then taking differences. (Lest you think this is just using a finite-difference method, the key feature with analytic gradients & the parameter-shift rule is that you aren’t making any numerical approximations: the gradients are (in the ideal case) exact.) Watch Automatic Differentiation of Quantum Circuits and QHack 2022: Cody Wang – General parameter-shift rules for more details.
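Here is a minimal sketch of the parameter-shift idea for the simplest case I could think of; the setup is my own assumption, not taken from the videos. The "model" is a single qubit prepared as RY(theta)|0> with Z measured, so the expectation value is cos(theta) and the true gradient is -sin(theta). For a gate generated by a Pauli operator like this one, shifting the parameter by plus and minus pi/2 gives the gradient exactly, whereas a finite difference carries an error that depends on the step size.

```python
import numpy as np

def expectation(theta):
    """<Z> for the state RY(theta)|0>, which equals cos(theta)."""
    state = np.array([np.cos(theta / 2), np.sin(theta / 2)])
    z = np.diag([1.0, -1.0])
    return float(state @ z @ state)

def parameter_shift_gradient(f, theta):
    """Gradient from two evaluations shifted by +/- pi/2 (Pauli-generated gate)."""
    return 0.5 * (f(theta + np.pi / 2) - f(theta - np.pi / 2))

def finite_difference_gradient(f, theta, h=1e-2):
    """Central finite difference, for comparison; approximate for any h > 0."""
    return (f(theta + h) - f(theta - h)) / (2 * h)

theta = 0.7
exact = -np.sin(theta)
print("parameter-shift error:  ", abs(parameter_shift_gradient(expectation, theta) - exact))
print("finite-difference error:", abs(finite_difference_gradient(expectation, theta) - exact))
```

On real hardware both estimates are also affected by shot noise, which this noiseless sketch ignores.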

In addition, it is possible to use a backpropagation-like method to train these models. With this method, the number of times the cost function needs to be evaluated to compute gradients can be reduced. See the video Amira Abbas: On quantum backpropagation, information reuse and cheating measurement collapse (paper here).

Quantum models can become untrainable due to barren plateaus, meaning the cost function landscape becomes flatter and flatter as the number of qubits the model acts on increases. Originally, the barren plateau phenomenon was identified for models where the cost function depended on the measurement outcomes of all the qubits in the model, and where the circuit for the model was deep.

However, recent work (Cost function dependent barren plateaus in shallow parametrized quantum circuits) has extended the analysis of barren plateaus to shallower circuits, and found that local cost functions (i.e., ones which depend on measurement outcomes from a small number of qubits) and shallow circuits do not exhibit these plateaus (at least, in the absence of noise).
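The difference between global and local cost functions can be seen even in a toy model. The sketch below is an assumption chosen for illustration: n qubits start in |0>, each gets its own single-qubit rotation by an angle theta_j, and there are no entangling gates. The global cost is one minus the probability that all qubits are measured in 0, and the local cost is one minus the average probability that each individual qubit is measured in 0. Sampling the angles uniformly, the variance of the gradient with respect to the first angle works out to (1/8)(3/8)^(n-1) for the global cost and 1/(8n^2) for the local cost, which the Monte Carlo estimate below should roughly reproduce.

```python
import numpy as np

def global_cost_grad(thetas):
    """d/d(theta_0) of C_G = 1 - prod_j cos^2(theta_j / 2)."""
    rest = np.prod(np.cos(thetas[:, 1:] / 2) ** 2, axis=1)
    return 0.5 * np.sin(thetas[:, 0]) * rest

def local_cost_grad(thetas):
    """d/d(theta_0) of C_L = 1 - (1/n) * sum_j cos^2(theta_j / 2)."""
    n = thetas.shape[1]
    return 0.5 * np.sin(thetas[:, 0]) / n

def gradient_variances(n_qubits, n_samples=200_000, seed=0):
    """Monte Carlo estimate of the gradient variance for both costs."""
    rng = np.random.default_rng(seed)
    thetas = rng.uniform(-np.pi, np.pi, size=(n_samples, n_qubits))
    return np.var(global_cost_grad(thetas)), np.var(local_cost_grad(thetas))

for n in (2, 4, 8):
    g, l = gradient_variances(n)
    g_theory = (1 / 8) * (3 / 8) ** (n - 1)
    l_theory = 1 / (8 * n * n)
    print(f"n={n}: global {g:.2e} (theory {g_theory:.2e}), "
          f"local {l:.2e} (theory {l_theory:.2e})")
```

The global variance shrinks exponentially in the number of qubits, while the local one shrinks only polynomially, which is the qualitative distinction between the two kinds of cost function.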

A video from one of the authors of this work is available at Q2B 2020 | Cost-Function-Dependent Barren Plateaus in Shallow Quantum Neural Networks. It turns out that the presence of noise in the gates also induces a barren plateau, specifically when the depth of the model grows linearly with the number of qubits. See the video (QuAlg) Samson Wang: Noise-Induced Barren Plateaus in Variational Quantum Algorithms for more details.

The nature of these plateaus is different, though: you can think of the cost function landscape as becoming more and more “blurred” due to the noise, meaning it becomes harder and harder to resolve the actual cost function values at distinct parameter points. This is in contrast with noise-free barren plateaus: the actual cost function at distinct parameter points can be easily resolved, but the range of parameter values across which the cost function varies gets smaller and smaller.

A summary of these ideas is presented in the 2021 Qiskit Global Summer School video Lecture 8.2 - Barren Plateaus, Trainability Issues, and How to Avoid Them (lecture notes here).

Looking at the image above, and comparing with the image of various kinds of QNNs, you can start to see why quantum convolutional neural networks might be trainable: the circuit depth grows slowly as the number of qubits increases, and the output of the model comes from measuring a small number of qubits. Indeed, as discussed in the video above, quantum CNNs do not have barren plateaus.
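As a rough back-of-the-envelope sketch of that slow growth, suppose (these are my assumptions, not a description of any particular paper's architecture) that each convolution-plus-pooling layer keeps half of the remaining qubits, that parameters are shared across the register within a layer, and that the circuit stops when one qubit is left to measure. The hypothetical helper below counts the layers and parameters that result.

```python
import math

def qcnn_structure(n_qubits, params_per_layer=2):
    """Count layers and parameters under the halving assumption.

    params_per_layer is a made-up illustrative value: the number of
    shared parameters in one convolution-plus-pooling layer.
    """
    layers = 0
    active = n_qubits
    while active > 1:
        active = math.ceil(active / 2)  # pooling keeps half of the qubits
        layers += 1
    return layers, layers * params_per_layer

for n in (8, 64, 1024):
    layers, params = qcnn_structure(n)
    print(f"{n} qubits: {layers} layers, {params} parameters")
```

Under these assumptions, both the number of layers and the number of parameters grow like the logarithm of the number of qubits.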

Finally, the video Subtleties in the trainability of quantum machine learning models further discusses how features of the model such as global measurements, deep circuits, and highly entangling circuits can induce barren plateaus, as well as how the data set used to train the model can itself induce them.

Generalization Capability of Quantum Neural Networks

Beyond being able to train quantum models, one big topic is proving statements about the model’s ability to generalize on as-yet-unseen data. It is known that quantum models can generalize well: in order to achieve a given generalization error e, the number of data points which need to be trained on scales at most as T*log(T)/e^2, where T is the number of parameters in the model. (Watch QIQT23 | Lukasz Cincio - Generalization in quantum machine learning from few training data for details.)
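To get a feel for what that scaling means, here is a tiny sketch that just evaluates T*log(T)/e^2. Keep in mind that the bound hides a constant factor, so the absolute numbers are not meaningful on their own (and the choice of natural logarithm here is mine); only the ratios between them are informative.

```python
import math

def training_set_scaling(n_params, target_error):
    """T * log(T) / e^2, up to an unspecified constant factor."""
    return n_params * math.log(n_params) / target_error ** 2

base = training_set_scaling(100, 0.1)
# Halving the target error multiplies the requirement by four.
print(training_set_scaling(100, 0.05) / base)
# Doubling the parameter count slightly more than doubles it.
print(training_set_scaling(200, 0.1) / base)
```

In other words, the cost of a tighter generalization error is quadratic, while the cost of a bigger model is only slightly worse than linear in the number of parameters.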

In addition, researchers have been defining various measures of the capacity or power of quantum models, and relating those to generalization error. Watch the 2021 Qiskit Global Summer School video Lecture 10.2 - The Capacity and Power of Quantum Machine Learning Models for an overview of various ideas proposed in the literature. (Lecture notes are here.) In addition, watch the video QML Meetup: Dr David Sutter (IBM Research, Zurich), The power of quantum neural networks for a deep dive on one interesting measure discussed in the previous video (namely, the effective dimension). Two papers on this measure are Effective dimension of machine learning models & The power of quantum neural networks.

Examples of Projects using QNNs

A few examples of projects which use QNNs in one way or another include:

Quantum data learning for quantum simulations in high-energy physics uses QNNs to predict quantum phases of ground states of a particular physics model. What’s interesting about this paper is that it blends both QML and quantum simulation techniques (see Figure 1).

Quantum Methods for Neural Networks and Application to Medical Image Classification (which itself builds on prior works by the authors) introduces a family of data loading circuits with analogs to classical NNs, as well as a training method for them. These QNNs are applied in the context of standard medical image data sets.

Quantum Neural Networks for a Supply Chain Logistics Application uses data loading circuits similar to those above, but the model is based on the paradigm of reinforcement learning. These QNNs are applied in the context of identifying good shipping routes for a supply chain logistics problem.

Wrap-up

In this article, we took a look at quantum neural networks (QNNs), and saw how various analogues of classical NNs have been defined in the literature. Barren plateaus are the bane of QNNs, rendering them untrainable. For some models and data sets, these plateaus do not occur, making them better candidates for actual use in practice. Quantum models have favorable generalization properties, and may offer some advantages in terms of being able to achieve a given level of performance using less data than purely-classical models.

How are you enjoying this series? Leave a comment and let me know!